Yesterday (7th February 2023) Microsoft officially announced that it will soon launch AI-powered versions of Bing and Edge, which it claims are more powerful than ChatGPT. These systems will use OpenAI’s large language model wrapped in Microsoft’s Prometheus model (a safety system to control and filter out inappropriate results).

This news comes as no surprise, seeing as Microsoft recently announced a new investment, reported to be $10 billion, in phase three of its partnership with OpenAI. It’s also hot on the heels of last year’s launch of ChatGPT, which is currently taking the world by storm. Some are saying that AI presents the biggest threat in decades to today’s search engines.

How does it work?

You can test a preview right now on Bing.com. Simply type into the box or chat with the search engine and it generates relevant answers for you. It is very easy to use. If you choose to chat with the bot it will speak back to you, sometimes just saying ‘here is what I found’ and sometimes giving a short vocal answer.

We tried to have a chat exchange with the preview version but it didn’t seem to remember what we had asked before, instead generating new search results based on the last vocal prompt. But this is just a preview with very limited capabilities. It turns out that you only get access to 'new Bing' if you join the waiting list, so we expect to see more exciting developments in time.

Can I download the latest version of Edge now?

The full launch hasn’t happened yet, but you can download the Dev channel version of Microsoft Edge here. To request access to the latest features you need to click on the Bing logo in the top right corner and ask to join the waiting list. Microsoft claims this waiting period can be sped up by making Edge your default browser and setting MSN as your homepage. Features are currently limited on the desktop version. You can also download the app but you'll still be in the queue for access to the new Bing.

So, although we can’t fully test the new app, it claims to offer a load of new features. We’ll have to wait for our access to be granted to test it properly. Watch this space!

What is different about the new Microsoft Edge?

To summarise, Microsoft claims that its OpenAI powered browser can give you:

- A better search experience that lets you easily refine your search to get the best answers.

- Complete answers that summarise web results, from a simple query to instructions on how to do something, saving time scrolling through irrelevant results.

- Content generation from writing an email to creating a quiz. Results will include citations so you can do extra research yourself if needed.

- Edge Sidebar features such as Chat and Compose, which Microsoft claims can write summaries of articles, social media posts and so on. Again, these will include links to source information so you can check where the information came from.

It will be interesting to test Chat and Compose to see what they can do for tasks such as writing long form content, something ChatGPT excels in. We're expecting them to be better, seeing as their AI capabilities are supposed to be much more advanced than GPT-3 and 3.5.

What’s all the fuss about?

AI tools have been around for some time in many different forms such as Alexa and Siri but the advent of AI powered internet search is indicative of a new era in the way people use the internet.

What’s Google doing?

Only a day earlier (6th February 2023), Google announced the imminent launch of its own AI powered search, Bard. This model has been made available to a selected group of early testers. It is powered by its own AI service LaMDA (Language Model for Dialogue Applications).

It seems Google is a bit late to the party since ChatGPT went viral. This is especially true considering the existential threat AI search poses to its business model.

Google is currently the number one search engine and its entire business model is based on search and display advertising revenue. A new way of searching the web could see the demise of the great search engine in its current form unless Google can keep up with the times. We’ll have to wait and see what Bard has to offer.

It's rumoured that Google’s delay in launching an AI search model is down to the fact that Google wanted to ensure it was completely safe to use. We can’t really blame them since Blake Lemoine, a Google engineer, claimed that the technology had become sentient!

What is ChatGPT?

If you haven’t heard about ChatGPT by now, your head must have been in the sand. ChatGPT is an artificial intelligence chatbot developed by OpenAI that has gone viral and is making a buzz for various reasons, both good and bad.

It will be very interesting to see if the new OpenAI powered Edge will blow ChatGPT out of the water. If it really is as good as it’s billed to be, we could see a huge swing of users converting to Edge over Google Chrome in the future, unless Google’s Bard has the ‘edge’ to compete. See what we did there?

How does ChatGPT work?

ChatGPT is a fine-tuned member of OpenAI’s GPT-3.5 family of large language models, trained using Reinforcement Learning from Human Feedback (RLHF). It works in a conversational way and responds to the tasks asked of it in just a few seconds. Its uses are extensive: you can ask it to write a text commentary, poems and even code!

The chatbot was launched as a free research preview on 30th November 2022 and its popularity quickly gained momentum across the globe. As a result, people have been using it for all manner of things, from writing essays to mass production of blog articles. Some have even suggested that it will replace Google!

Now we know that the new Microsoft Edge and Bing are powered by OpenAI, it's got us thinking that the soft launch of ChatGPT may have fed the system with an unimaginable amount of query data that will help refine Microsoft's OpenAI powered system further.

Concerns about ChatGPT

You may have seen in the newspapers that some students have used this artificial intelligence to cheat in exams. This understandably raises serious concerns across the education sector.

People are also worried that mass creation of AI generated content will pollute the web making genuine human written content harder to find.

The prospect of the introduction of watermarking in the future may be the only way to stop students from using AI tools and deter content creators from using it. There's currently a huge discussion surrounding whether search engines will devalue AI content or not.

We have been keeping a close eye on the developments surrounding AI generated content and have had a play with ChatGPT ourselves. Check out the results.

Putting ChatGPT to the test

In all honesty, it’s quite fun to type in a command and see ChatGPT generate content from a single prompt. Just sit back and watch it do all the work. If you want to cover more detail or different scenarios then all you need to do is expand on your instructions.

We put this AI tool to the test for a number of different tasks.

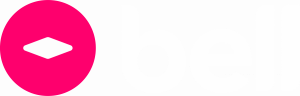

Task 1: Creative team briefing

Applied in the media field, it turns out that some tedious tasks can be automated. This can help speed up productivity in the workplace. Here’s what we asked:

As you can see, ChatGPT generated this lovely guide for us with dimensions for ad copy. Very useful indeed.

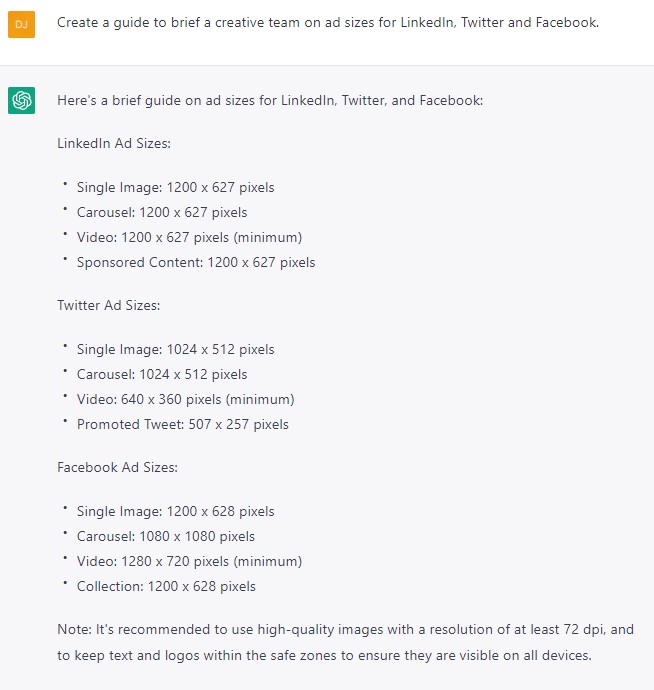

Task 2: Ad copy generation

We wanted to see how good the tool was at creating catchy ad titles. See the results below.

In the click of a button, the tool generated these ad titles that look like they could have been written by a real human being.

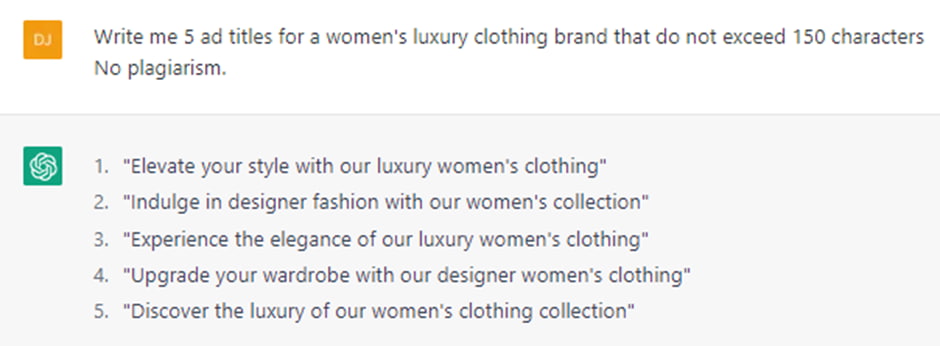

Task 3: Blog writing

People rave about ChatGPT’s amazing ability to write blogs. So we put it to the test to write an article on Performance Max ad campaigns. We also asked it to make sure the article passed any AI detection. We did this because there’s an argument that AI generated content may be frowned upon by search engines, potentially causing deindexing issues on pages with AI content, along with loss of trust and rank.

Unfortunately, the AI detector we used did pick up that the content was AI generated, so humans win, this time around.

Not only this, but we ran this article past the Head of Paid here at Bell to get her opinion on it. She informed us that some points raised in the document were factually incorrect.

So, although it might be easy to type in a prompt and make a cuppa while the chatbot does all the hard work, you really shouldn’t rely on the quality of the output or you might find yourself in a sticky situation!

Concerns surrounding the use of large language models

Love it or hate it, there are huge concerns over the future of AI generated content.

Firstly, widespread misuse of AI tools in the education sector could devalue education as a whole and shake the education system to the core.

There is also a fear that AI content will flood the internet, resulting in huge amounts of artificially generated content and the potential for factually incorrect information becoming rife.

Content creators are fearing for their jobs - will they become redundant in these futuristic times?

Not only this, but existing AI detectors can sometimes flag human written content as being artificially generated, muddying the waters even further.

But perhaps most concerning of all, is the potential for AI to be used to generate disinformation campaigns. OpenAI are acutely aware of the risks and have collaborated with disinformation researchers, policy analysts and machine learning experts to investigate how large language models have the potential to be misused for disinformation.

OpenAI says:

“Our bottom-line judgment is that language models will be useful for propagandists and will likely transform online influence operations. Even if the most advanced models are kept private or controlled through application programming interface (API) access, propagandists will likely gravitate towards open-source alternatives and nation states may invest in the technology themselves.”

The prospect of misuse is scary. You can read the full report here.

AI Watermarking

Due to the concerns surrounding widespread use of large language models, OpenAI, the creator of ChatGPT, is considering ways to introduce a watermarking feature.

Scott Aaronson, a professor of computer science, was hired as a guest researcher at OpenAI to research and develop watermarking technology. During a lecture on AI safety, he said:

“My main project so far has been a tool for statistically watermarking the outputs of a text model like GPT. Basically, whenever GPT generates some long text, we want there to be an otherwise unnoticeable secret signal in its choices of words, which you can use to prove later that, yes, this came from GPT…watermarking would simultaneously attack most misuses.”

So, how does watermarking work?

Scott explains in his YouTube lecture that each input and output in GPT is a string of tokens. These are made from words, punctuation and so on. There are around 100,000 tokens in total and GPT continually generates a probability distribution over the next token to be generated. He explains that to create a watermark:

“instead of selecting the next token randomly, the idea will be to select it pseudorandomly, using a cryptographic pseudorandom function, whose key is known only to OpenAI.”

However, Scott freely admits that this technology could be defeated by using another AI tool to paraphrase the output. He adds that sub-editing the content manually, by moving sentences around or deleting words, should keep the watermark intact.
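To make the idea more concrete, here is a minimal Python sketch of keyed pseudorandom token selection. It is a toy built on our own assumptions, not OpenAI's implementation: the key, the four-token context window, the 50-token vocabulary and the `toy_model` stand-in are all hypothetical. The selection rule (pick the token that maximises r ** (1 / p)) and the detection score are, as we understand it, along the lines Scott has described publicly, but treat both as assumptions.

```python
import hashlib
import hmac
import math
import random

SECRET_KEY = b"hypothetical-provider-key"  # assumption: stands in for the private key
CONTEXT = 4  # assumption: how many previous tokens seed the pseudorandom function
VOCAB = list(range(50))  # toy vocabulary of token IDs (real models use ~100,000)


def prf(key, context, token):
    """Keyed pseudorandom number strictly between 0 and 1 for a candidate token."""
    message = repr((tuple(context), token)).encode("utf-8")
    digest = hmac.new(key, message, hashlib.sha256).digest()
    n = int.from_bytes(digest[:8], "big") >> 12  # keep 52 bits
    return (n + 0.5) / 2**52


def toy_model(context):
    """Stand-in for a language model: a fixed Zipf-like next-token distribution."""
    weights = [1 / (t + 1) for t in VOCAB]
    total = sum(weights)
    return {t: w / total for t, w in zip(VOCAB, weights)}


def pick_token(key, context, probs):
    """Pick the token maximising r ** (1 / p), compared in log space for stability."""
    return max(probs, key=lambda t: math.log(prf(key, context, t)) / probs[t])


def generate(key, length):
    """Generate `length` watermarked tokens from the toy model."""
    tokens = [0] * CONTEXT  # fixed start-of-text padding
    for _ in range(length):
        context = tokens[-CONTEXT:]
        tokens.append(pick_token(key, context, toy_model(context)))
    return tokens[CONTEXT:]


def detect(key, tokens):
    """Average of ln(1 / (1 - r)): about 1 for ordinary text, higher if watermarked."""
    padded = [0] * CONTEXT + list(tokens)
    scores = [
        math.log(1 / (1 - prf(key, padded[i - CONTEXT:i], padded[i])))
        for i in range(CONTEXT, len(padded))
    ]
    return sum(scores) / len(scores)


watermarked = generate(SECRET_KEY, 200)
ordinary = random.Random(0).choices(VOCAB, k=200)  # unwatermarked comparison text
print(round(detect(SECRET_KEY, watermarked), 2), round(detect(SECRET_KEY, ordinary), 2))
```

Anyone without the key sees choices that look random, while the key holder can recompute the pseudorandom numbers and check whether the chosen tokens score suspiciously high. Because each score depends only on a short run of neighbouring tokens, deleting a word disturbs just a few scores, whereas a full paraphrase replaces nearly every token and wipes the signal out.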

Conclusion

The advent of AI search could revolutionise the way we search for information on the internet. We are experiencing the dawn of a new era in search technology with the announcements of OpenAI powered Edge and Google’s Bard. Only time will tell where it will take us.

Will the internet morph so much that, looking back in ten years’ time, good old fashioned search and websites will seem like a distant memory?

The prospect of widespread AI content is a hard pill to swallow for some, while others embrace this technology and its insane ability to regurgitate information at lightning-fast speeds.

As we have seen, AI can speed up workflow across a range of activities, from searching the web to writing long articles, and helping with pay-per-click ad copy which can only be a bonus, right?

From a content creation point of view, human written content is the way to go. After all, humans are a fountain of real life knowledge, while AI content is scraped from the web and recycled to form new content. Another reason to err on the side of caution by choosing human content over AI is the uncertainty around whether search engines will downgrade website rankings or refuse to index AI content, should they ever clamp down on artificially generated content appearing in SERPs. However, with the future of search being powered by AI, it would seem hypocritical for search engines to discriminate against AI content (if detectable).

As we don’t know what will happen with regards to search engines’ views on AI content and whether watermarking will work or not, it’s probably best practice not to use unedited AI output for mass content creation right now. At Bell, we're advocates for human content production as we feel AI output lacks emotion and a human touch. Although, that's not to say that these tools shouldn’t be used as a starting point in the creation process to help generate research to use in an article.

Output should be fact checked and thought of as an ideas bank on which to form genuine human written content. So, using AI for content, whether ChatGPT, the new Edge or Bing or any other similar AI tool, does have some advantages right now.

The advent of watermarking AI generated content could make people re-evaluate its use, but it seems a universal watermark is a long way off. Who knows what the outcome will be!

If your business needs help to navigate the future of search or if you’re looking for human content writers to produce unique articles to promote your business, get in touch for a chat.