Next month, on July 1st 2024 to be exact, Google is removing access to the old Google Analytics (Universal Analytics, or UA) interface and deleting all your old UA data, leaving you with just your GA4 data.

This means that if you want to keep any of your data for Year-on-Year comparisons, or any of the valuable insights from your old marketing efforts, you only have this month to back that data up for future reference and informed decision-making.

You have various options, but sadly continuing to use the old Google Analytics interface to run reports is not one of them. You will need to extract the data you want to save.

Your options are:

1. Export your Data directly from the old Google Analytics UA interface.

2. Export from the old Google Analytics UA using Custom Reports.

3. Use the Google Sheets “Google Analytics Add-on” to extract your Data.

4. Use the Google Analytics Query Explorer.

5. Use the Google Analytics UA APIs.

6. Export Google Analytics UA data to BigQuery.

7. Back up using Supermetrics.

Some of these are easy, whilst some require a level of technical experience, but whichever path you choose, it is worth backing up at least your main KPIs to have seasonal historical benchmarks.

For now, we will just cover the first three “easy” options to back up your UA data.

Exporting Data Directly from UA

One of the easiest methods to extract Google Analytics data is to export it to CSV format or Google Sheets directly from the GA user panel.

To export the data, follow these steps:

1. Select the report and date range you want to export. Be aware that UA samples data more heavily over longer date ranges, so it is better to export several smaller date ranges and join them up later than to export a single 2-year range.

2. Next, click on the "Export data" option at the top right-hand corner.

3. Select the file format from the drop-down menu: CSV, Excel (XLSX), PDF or, easiest of all, a direct export to Google Sheets.

4. I would create a new Gmail account for this (something like companyname-ua-backups@gmail.com), since it comes with its own Google Drive space to back up to.

While this is an easy way to export data, it does have its limits:

• You can't apply more than 2 dimensions

• There is a limit of 5,000 rows per export

• Data is sampled if your date range is too wide
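If you follow the advice in step 1 and export several smaller date ranges, you will end up with a pile of CSV files to join up. Here is a minimal Python sketch of one way to do that. It assumes every file contains a single table with the same header row, and that any lines starting with "#" are comments that can be skipped. Both are assumptions about your exports, so check one of your files first. The function name `merge_exports` is just made up for this example.

```python
import csv


def merge_exports(paths, out_path):
    """Concatenate CSV exports that share one header row into a single file.

    Returns the number of data rows written (the header is not counted).
    """
    header = None
    rows_written = 0
    with open(out_path, "w", newline="", encoding="utf-8") as out_file:
        writer = csv.writer(out_file)
        for path in paths:
            # utf-8-sig reads files with or without a byte-order mark.
            with open(path, newline="", encoding="utf-8-sig") as in_file:
                # Assumption: blank lines and lines starting with "#" carry no data.
                lines = (ln for ln in in_file
                         if ln.strip() and not ln.startswith("#"))
                reader = csv.reader(lines)
                file_header = next(reader, None)
                if file_header is None:
                    continue  # nothing usable in this file
                if header is None:
                    header = file_header
                    writer.writerow(header)
                elif file_header != header:
                    # Refuse to mix reports that have different columns.
                    raise ValueError(f"{path} has different columns: {file_header}")
                for row in reader:
                    writer.writerow(row)
                    rows_written += 1
    return rows_written
```

You would call it with the files in date order, for example `merge_exports(["2022-01.csv", "2022-02.csv"], "ua-pageviews-2022.csv")` (the file names here are placeholders). Keeping the original monthly files alongside the merged one is a cheap extra safety net.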

Exporting Data from UA Using Custom Reports

Alternatively, you can export GA data from the Custom Reports section in the GA panel.

After creating a new custom report, all you need to do is add dimensions, metrics and filters to the report.

You can then fetch the data by opening the report and choosing the export option in the top right-hand corner.

You will be able to add up to 5 dimensions this way, and can also add or remove metrics as per your requirements.

The data sampling and row limits described above still apply.
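Because of the row limit, it is worth checking whether an exported file has been cut off. The sketch below is one rough way to do that in Python: it counts the data rows in a CSV export and flags the file if it has reached the 5,000-row limit mentioned earlier. It assumes the file holds one header row followed by data rows, with optional "#" comment lines, and the function names are invented for illustration.

```python
import csv

ROW_LIMIT = 5000  # the export row limit mentioned in this article


def count_data_rows(path):
    """Count data rows in a CSV export, ignoring the header row,
    blank lines and lines starting with '#'."""
    with open(path, newline="", encoding="utf-8-sig") as f:
        lines = (ln for ln in f if ln.strip() and not ln.startswith("#"))
        reader = csv.reader(lines)
        next(reader, None)  # skip the header row
        return sum(1 for _ in reader)


def looks_truncated(path, limit=ROW_LIMIT):
    """Return True when the file has reached the row limit, which suggests
    the export may have been cut off and the date range should be narrowed."""
    return count_data_rows(path) >= limit
```

If a file is flagged, re-exporting it as two or more shorter date ranges is usually the simplest fix.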

Using the Google Sheets add-on for Analytics

This method is also useful for exporting GA4 data to Google Sheets, but for now we will look at exporting UA data.

The Google documentation for this method is here.

To install it, open the add-ons store from within Google Sheets and choose the Google Analytics Add-on.

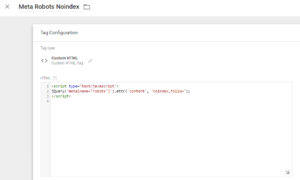

Then, to use it, just create a new report from the add-on's menu (“Add-ons” > “Google Analytics” > “Create new report”).

Then, from the box that appears on the right, select the Analytics Account, Property and View you want to get data from, and choose your configuration options.

You then need to configure the report. In this case that just means setting the start and end dates, but there are plenty of other options.

Then, to run the report you’ve just created, select “Add-ons” > “Google Analytics” > “Run Reports” from the menu bar.

This will create a new tab with your report data.

You can configure multiple reports to be run, and you can also schedule your reports to run automatically. However, since we are just backing up data, I would simply do one tab per month.
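If you go with one tab per month, typing out the start and end dates for a couple of years of reports gets tedious. The small Python sketch below generates the first and last day of each month in a range, ready to copy into the report configuration. It assumes the add-on takes dates in YYYY-MM-DD form, so check that against the Google documentation, and `month_ranges` is just a name picked for this example.

```python
import calendar
from datetime import date


def month_ranges(start, end):
    """Yield (first_day, last_day) for every calendar month between
    start and end, clipped so nothing falls outside that range."""
    year, month = start.year, start.month
    while (year, month) <= (end.year, end.month):
        first = max(start, date(year, month, 1))
        days_in_month = calendar.monthrange(year, month)[1]
        last = min(end, date(year, month, days_in_month))
        yield first, last
        month += 1
        if month > 12:
            year, month = year + 1, 1


for first, last in month_ranges(date(2023, 1, 1), date(2023, 3, 31)):
    print(first.isoformat(), last.isoformat())
# prints:
# 2023-01-01 2023-01-31
# 2023-02-01 2023-02-28
# 2023-03-01 2023-03-31
```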

It's done!

If you need help or are interested in hearing more about our Analytics and SEO services, do not hesitate to get in contact.
